🧪 A/B Tests That Separate Target Audience from Buyer Persona (No Guessing)

When results drop, our brain wants to change everything.

New headline. New offer. New audience. New page. New ad.

All at once.

That feels busy.

But it creates one big problem:

We don’t know what worked.

So we do the opposite.

We run A/B tests that separate two jobs:

Target audience = who we aim at (WHO/WHERE)

Buyer persona = what we say (WHAT/WHY)

When we keep those jobs clean, our tests stop lying. Our consumer behavior signals get clearer. And we get faster wins inside The Buyer Clarity System™.

Test target audience variables (who/where) and buyer persona variables (what/why) in separate tests. Change one thing per week. Track the right metrics for each layer: CTR and bounce rate for targeting; time on page and CTA clicks for messaging; conversion and close rate for the final truth.

🧠 Why most A/B tests fail (and waste money)

Most “A/B tests” are really “A/B/C/D chaos.”

We change:

the audience

the headline

the offer

the page layout

the CTA

Then we say: “Variant B won!”

But we don’t know why.

That’s not testing. That’s guessing with extra steps.

A clean A/B test is simple:

one change → one week → one winner

That’s it.

🎯 The big split: Target audience vs buyer persona variables

Here’s the difference in plain words.

🧭 Target audience variables = WHO/WHERE

These are targeting choices like:

location

job title

industry

interests

audience list / retarget group

channel (search vs social)

placement (feed vs stories)

device (mobile vs desktop)

They answer: Who sees the message?

🗣️ Buyer persona variables = WHAT/WHY

These are message choices like:

pain vs dream headline

proof-first vs steps-first

persona objections in FAQ

“for/not-for” box

CTA wording (guide vs trial vs call)

48-hour win promise

proof type (reviews vs case study)

They answer: What makes them act?

Both matter.

But they must be tested separately.

🧩 The clean testing ladder (the order that makes tests honest)

To keep results honest, we test in this order:

Target audience fit (WHO/WHERE)

Buyer persona fit (WHAT/WHY)

Page friction (forms, speed, mobile)

If we test copy before targeting is right, we blame the wrong thing.

Pick the lake first (target audience).

Then pick the fish (buyer persona).

🧭 Target audience tests: What to test (WHO/WHERE)

Target audience tests are about aim.

We test things like:

🧭 Audience slice tests (WHO)

Audience A: “busy parents”

Audience B: “new lifters”

Audience C: “event-ready urgent”

Or B2B:

Audience A: ops leads

Audience B: finance leads

Audience C: owners

🗺️ Location tests (WHO)

city vs nearby cities

radius 5 miles vs 15 miles

state vs state

📣 Channel tests (WHERE)

search vs social

YouTube vs Instagram

email vs retargeting

📍 Placement tests (WHERE)

feeds vs stories vs reels

desktop vs mobile

Rule: When testing target audience variables, keep the message the same.

Same headline. Same offer. Same landing page.

Only change the audience or channel.

🗣️ Buyer persona tests: What to test (WHAT/WHY)

Buyer persona tests are about meaning.

We test:

🩹 Pain vs 🌈 Dream vs 🧾 Proof headlines

Pain: “Stop [pain]…”

Dream: “Get [dream]…”

Proof: “People like you got [result]…”

🧱 Proof placement tests

proof near CTA vs proof lower

proof near pricing vs proof only in footer

❓ Objection micro-FAQ tests

micro-FAQ under CTA vs no micro-FAQ

FAQ order: top doubt first vs last

🔘 CTA step-size tests

“Get the complimentary guide” vs “Book a call”

“Start trial” vs “Request demo”

⏱️ 48-hour win tests

with 48-hour win block vs without

different 48-hour win wording

Rule: When testing buyer persona variables, keep the audience the same.

Same target audience. Same channel. Same budget.

Only change the message block.

🧪 The one-change-one-week rule (non-negotiable)

We use one simple testing rhythm:

Change one thing

Run one week

Pick a winner

Log the result

Stack wins

Why one week?

Because buyer behavior changes by day. Weekdays vs weekends. Paydays. Work schedules.

A week gives cleaner truth.
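A quick way to check the one-week rule is to confirm that a test window really touches every day of the week. This is a minimal Python sketch. The function name `covers_every_weekday` and the dates are made up for illustration.

```python
from datetime import date, timedelta

def covers_every_weekday(start: date, end: date) -> bool:
    """Return True if the inclusive window start..end includes all 7 weekdays."""
    if end < start:
        return False
    days = (end - start).days + 1
    # Seven consecutive days always cover every weekday, so checking 7 is enough.
    seen = {(start + timedelta(days=i)).weekday() for i in range(min(days, 7))}
    return len(seen) == 7

# Hypothetical test windows
full_week = covers_every_weekday(date(2024, 3, 4), date(2024, 3, 10))  # Monday to Sunday
short_run = covers_every_weekday(date(2024, 3, 4), date(2024, 3, 8))   # Monday to Friday
print(full_week, short_run)
```

A run that stops on Friday never sees weekend behavior, so we would not pick a winner from it.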

📊 Metrics: Which numbers matter for each layer

Most teams track the wrong numbers.

Here’s the simple map:

🎯 Metrics for target audience tests (WHO/WHERE)

These tell us if we aimed at the right group.

CTR (do they care enough to click?)

CPC (how expensive is attention?)

Landing page bounce (were they the right people?)

Cost per CTA click (optional)

Best “truth signal” for target audience: CTR + bounce together.

low CTR = wrong people or wrong hook

high bounce = wrong people or mismatched promise
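The targeting metrics above are simple ratios. Here is a small Python sketch that computes them from raw counts, assuming the common definitions: CTR is clicks divided by impressions, CPC is spend divided by clicks, and bounce rate is bounced sessions divided by sessions. The function name and every number are made up for illustration.

```python
def audience_metrics(impressions, clicks, spend, sessions, bounces):
    """Compute CTR, CPC, and bounce rate from raw counts.

    A metric is None when its denominator is zero.
    """
    return {
        "ctr": clicks / impressions if impressions else None,
        "cpc": spend / clicks if clicks else None,
        "bounce": bounces / sessions if sessions else None,
    }

# Made-up numbers for two audience slices that saw the same ad and page.
audience_a = audience_metrics(impressions=10_000, clicks=200, spend=100.0,
                              sessions=180, bounces=90)
audience_b = audience_metrics(impressions=10_000, clicks=120, spend=100.0,
                              sessions=110, bounces=88)

for name, m in (("A", audience_a), ("B", audience_b)):
    print(f"Audience {name}: CTR {m['ctr']:.1%}, "
          f"CPC {m['cpc']:.2f}, bounce {m['bounce']:.0%}")
```

Reading CTR and bounce side by side is the point: a slice that clicks more and bounces less is the better aim.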

🧠 Metrics for buyer persona tests (WHAT/WHY)

These tell us if the message fits how the buyer thinks.

Time on page (do they trust?)

CTA clicks (does the next step feel safe?)

Scroll depth (optional)

Conversion rate (did they take the step?)

Reply rate (if email)

Best “truth signal” for buyer persona: CTA clicks + conversion.

💰 Metrics for final truth (sales)

These tell us if we’re attracting buyers, not just clicks.

Lead quality (do they fit?)

Close rate (do they buy?)

Refunds/churn (did we set the right promise?)

Velocity (how fast from first click to yes?)

Best “truth signal” overall: conversion + close + refunds.

🧯 The biggest testing mistake: mixing WHO and WHAT in one test

Here’s a classic failure:

We change the audience AND the headline.

Result: “Variant B won.”

But why?

Was it the audience?

Was it the headline?

Was it the placement?

Was it the day of week?

We can’t learn. So we can’t grow.

Clean tests create learning. Learning creates wins.

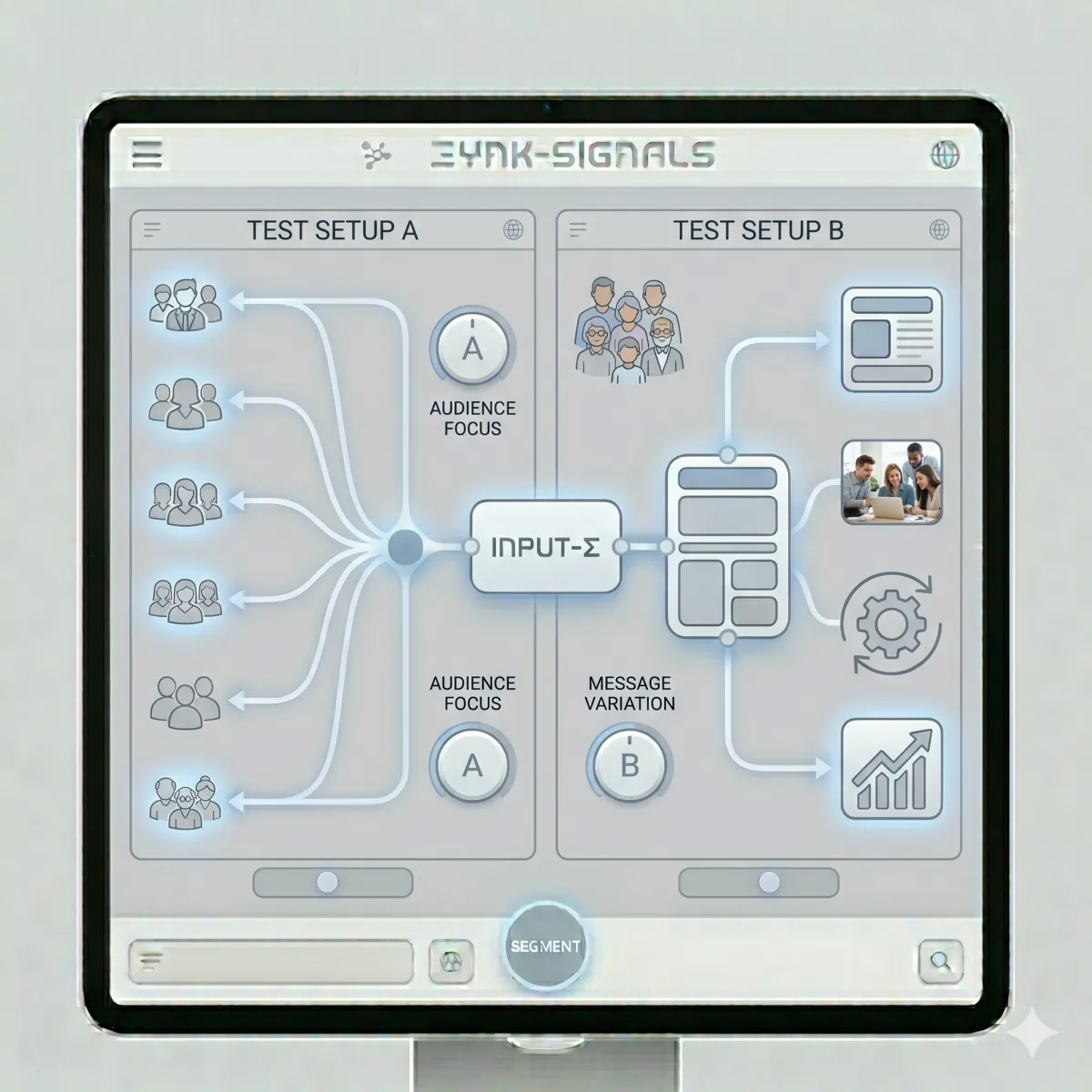

🧠 The simple test setup (copy this)

Here’s the clean setup for most businesses:

🧾 Test Setup A: Target audience test (WHO)

Same ad creative

Same headline

Same landing page

Same budget

Only change: audience

Run 7 days. Pick winner by CTR + bounce + conversion.

🧾 Test Setup B: Buyer persona test (WHAT)

Same audience

Same channel

Same budget

Only change: headline or proof or FAQ or CTA

Run 7 days. Pick winner by CTA clicks + conversion + lead quality.
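The two setups above can be checked before a test goes live. This Python sketch compares two variants and reports whether the test is a clean target audience test, a clean buyer persona test, or a mix. The field names in `AUDIENCE_FIELDS` and `MESSAGE_FIELDS` are hypothetical; swap in whatever your ad platform or spreadsheet uses.

```python
# Hypothetical field names, grouped by the WHO/WHERE vs WHAT/WHY split.
AUDIENCE_FIELDS = {"audience", "location", "channel", "placement", "device"}
MESSAGE_FIELDS = {"headline", "proof", "faq", "cta"}

def classify_test(variant_a: dict, variant_b: dict) -> str:
    """Label a test by which single field differs between the two variants."""
    keys = set(variant_a) | set(variant_b)
    changed = sorted(k for k in keys if variant_a.get(k) != variant_b.get(k))
    if not changed:
        return "not a test (nothing changed)"
    if len(changed) > 1:
        return "not clean (changed: " + ", ".join(changed) + ")"
    field = changed[0]
    if field in AUDIENCE_FIELDS:
        return "target audience test"
    if field in MESSAGE_FIELDS:
        return "buyer persona test"
    return "other test"  # for example page friction, such as form length

base = {"audience": "ops leads", "channel": "search",
        "headline": "pain", "cta": "guide"}

print(classify_test(base, dict(base, audience="finance leads")))
print(classify_test(base, dict(base, headline="proof")))
print(classify_test(base, dict(base, audience="finance leads", headline="proof")))
```

The third call is the classic mistake: audience and headline changed together, so the check refuses to call it a clean test.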

🧭 Target audience test menu (easy options)

If we don’t know what to test first, we pick from this menu.

🧭 Target audience tests that work fast

Narrow vs broad (tight role vs wide role)

Urgent vs non-urgent (pain now vs pain later)

Local radius small vs large

One channel vs another (search vs social)

Cold audience vs retargeting

Keep the copy the same.

🗣️ Buyer persona test menu (easy options)

Once the audience is solid, we tune the message.

🗣️ Buyer persona tests that work fast

Pain headline vs proof headline

Add micro-FAQ under CTA vs none

“For/not-for” box vs none

Proof by CTA vs proof lower

CTA step size (guide vs call)

Add 48-hour win block vs none

Keep the audience the same.

🧩 Swipe templates: A/B test variants that don’t confuse buyers

Below are safe templates you can paste and test.

🪧 Headline variants (buyer persona)

Variant A (pain):

“Stop [pain] and get [result] without [fear].”

Variant B (proof):

“People like you got [result]. Here’s the simple path.”

🔘 CTA variants (buyer persona)

Variant A (small step): “Get the complimentary guide”

Variant B (bigger step): “Book a quick call”

❓ Micro-FAQ variants (buyer persona)

Variant A: micro-FAQ under CTA (4–6 Qs)

Variant B: no micro-FAQ

🧾 Proof variants (buyer persona)

Variant A: 1–3 reviews by CTA

Variant B: proof below the fold

📍 Placement rules that keep tests fair

Tests get messy if the page changes around the test.

So we keep placement consistent:

Hero stays in the hero

CTA stays in the same spot

Proof stays near CTA (unless proof placement is the test)

Form stays the same length (unless form is the test)

One variable. Everything else locked.

📱 Mobile testing rules (don’t ignore this)

For many sites, most traffic is mobile.

So if a test “wins” on desktop but loses on mobile, it’s not a real win.

📱 Mobile rules

buttons full width

proof above CTA on mobile

forms 3–5 fields max

big spacing

no tiny text

If the site is hard on mobile, no headline can save it.

🧠 How to use consumer behavior to pick your next test

If we don’t know what to test, we follow the chain:

Low CTR → test hook (pain vs proof) OR test audience slice

High bounce → fix promise match (ad to page)

Low time on page → fix clarity (shorter hero, steps)

Low CTA clicks → add proof by CTA + micro-FAQ

Low conversion → reduce friction (form, offer size)

Consumer behavior tells us what’s broken.
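The chain above can be written as a small lookup that walks the funnel from top to bottom and returns the first weak spot. This is a Python sketch only. The cut-off numbers in `THRESHOLDS` are placeholders we invented, not benchmarks from this guide, so set them from your own history.

```python
# Placeholder cut-offs (assumptions). Replace with your own baselines.
THRESHOLDS = {
    "ctr": 0.01,             # share of impressions that click
    "bounce": 0.70,          # share of sessions that leave right away
    "time_on_page": 30,      # seconds
    "cta_click_rate": 0.05,  # share of visitors who click the CTA
    "conversion": 0.02,      # share of visitors who complete the step
}

def next_test(metrics: dict, thresholds: dict = THRESHOLDS) -> str:
    """Return the next action for the first weak signal, in funnel order."""
    if metrics["ctr"] < thresholds["ctr"]:
        return "test hook (pain vs proof) or test audience slice"
    if metrics["bounce"] > thresholds["bounce"]:
        return "fix promise match (ad to page)"
    if metrics["time_on_page"] < thresholds["time_on_page"]:
        return "fix clarity (shorter hero, steps)"
    if metrics["cta_click_rate"] < thresholds["cta_click_rate"]:
        return "add proof by CTA + micro-FAQ"
    if metrics["conversion"] < thresholds["conversion"]:
        return "reduce friction (form, offer size)"
    return "no weak signal found"

# Made-up page stats: clicks are fine, but visitors bounce.
page = {"ctr": 0.02, "bounce": 0.85, "time_on_page": 40,
        "cta_click_rate": 0.06, "conversion": 0.03}
print(next_test(page))
```

The order matters. We check the top of the funnel first, because a problem there hides everything below it.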

🧾 The test log (so we don’t repeat mistakes)

Every test needs a log. Simple.

✅ Test log template

Date

Test type: target audience or buyer persona

What changed (one line)

Why we tested it (which metric was weak)

Result after 7 days

Winner

Next test idea

This turns “marketing” into “learning.”
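If a spreadsheet feels too loose, the log template above maps cleanly onto a small record type. This Python sketch uses a dataclass with one field per line of the template and writes entries as CSV text. The class name `LogEntry`, the helper `to_csv`, and the sample entry are all invented for illustration.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields

@dataclass
class LogEntry:
    date: str
    test_type: str            # "target audience" or "buyer persona"
    what_changed: str         # one line
    why_tested: str           # which metric was weak
    result_after_7_days: str
    winner: str
    next_test_idea: str

def to_csv(entries) -> str:
    """Render log entries as CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(LogEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buf.getvalue()

# A made-up entry, only to show the shape of a row.
entry = LogEntry(
    date="2024-03-11",
    test_type="buyer persona",
    what_changed="Pain headline vs proof headline",
    why_tested="CTA clicks were weak",
    result_after_7_days="Proof headline got more CTA clicks",
    winner="Variant B (proof)",
    next_test_idea="Micro-FAQ under CTA vs none",
)
print(to_csv([entry]))
```

Because every field is required, an entry cannot be saved without saying what changed and why.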

🧪 Mini examples (clean tests in real life)

🛠️ Example: Local service business

Target audience test:

Audience A: radius 5 miles

Audience B: radius 15 miles

Same ad and page. Winner had higher CTR and lower bounce.

Buyer persona test:

Same audience.

Tested pain headline vs proof headline. Winner had more CTA clicks and calls.

💻 Example: B2B software

Target audience test:

Audience A: ops lead titles

Audience B: finance lead titles

Same landing page. Ops clicked more and converted more.

Buyer persona test:

Same ops audience.

Tested “integrations proof by CTA” vs “integrations lower.” Proof-by-CTA won.

🛒 Example: Ecommerce

Target audience test:

cold interest targeting vs retargeting

Same ad message. Retargeting had better conversion.

Buyer persona test:

Same retargeting audience.

Tested “safe ingredients” proof vs “results” proof. Safe proof won.

No fake stats. Just clean logic and clean learning.

❓ FAQ — A/B Tests for Target Audience vs Buyer Persona

1) What is the difference between testing target audience and testing buyer persona?

Target audience tests change who sees the message. Buyer persona tests change what we say to that same group.

2) What should we test first: target audience or buyer persona?

Test target audience first. If the wrong people see the message, even great buyer persona copy won’t convert.

3) What target audience variables can we A/B test?

We can test audience slices, locations, channels, placements, devices, and retargeting vs cold traffic.

4) What buyer persona variables can we A/B test?

We can test headlines (pain/proof), proof placement, micro-FAQs, CTA wording, offer step size, and 48-hour win blocks.

5) What is the one-change-one-week rule?

It means we change one thing only, run it for one week, and pick a winner. This keeps tests honest.

6) What metrics show target audience fit?

CTR, bounce rate, and conversion rate together show if the target audience is right.

7) What metrics show buyer persona message fit?

Time on page, CTA clicks, conversion rate, and reply rate show if the buyer persona message feels safe and clear.

8) Why do A/B tests give bad results sometimes?

Because we change too many things at once or we mix target audience and buyer persona changes in the same test.

9) How do we pick the next test?

Follow consumer behavior signals: low CTR points to the hook or the audience, high bounce points to a mismatch between ad and page, low CTA clicks point to missing trust (add proof or a micro-FAQ), and low conversion points to friction.

10) How many tests should we run at the same time?

Start with one test at a time per page. Running more tests at once creates noise and confusion.

11) How do we know a test winner is real?

A real winner improves more than one signal (CTR + conversion, or clicks + close rate) and stays strong for another week.
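One possible reading of this rule, sketched in Python: the variant must beat the control on at least two signals in week one, and again in the follow-up week. The function names, the `LOWER_IS_BETTER` set, and all numbers are assumptions for illustration.

```python
# For these signals a smaller number is the improvement (assumption).
LOWER_IS_BETTER = {"bounce", "cpc"}

def improved_signals(control: dict, variant: dict) -> list:
    """List the signals where the variant beats the control."""
    improved = []
    for name, base in control.items():
        if name not in variant:
            continue
        if name in LOWER_IS_BETTER:
            better = variant[name] < base
        else:
            better = variant[name] > base
        if better:
            improved.append(name)
    return sorted(improved)

def is_real_winner(week1, week2, min_signals: int = 2) -> bool:
    """Each week is a (control, variant) pair; both weeks must pass."""
    return all(len(improved_signals(c, v)) >= min_signals
               for c, v in (week1, week2))

# Made-up numbers: the variant wins on CTR and conversion in both weeks.
week1 = ({"ctr": 0.020, "conversion": 0.030, "bounce": 0.60},
         {"ctr": 0.025, "conversion": 0.040, "bounce": 0.62})
week2 = ({"ctr": 0.021, "conversion": 0.031, "bounce": 0.61},
         {"ctr": 0.024, "conversion": 0.038, "bounce": 0.61})
print(improved_signals(*week1), is_real_winner(week1, week2))
```

A variant that only lifts one number, or fades in week two, stays in the log as "not proven" instead of becoming the new default.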

12) What is the biggest goal of clean testing?

To build a buyer persona that converts and a target audience that is easy to reach, so we can scale with confidence.

📌 Key Takeaways

Target audience tests = WHO/WHERE (channels, targeting, placements)

Buyer persona tests = WHAT/WHY (headlines, proof, FAQs, CTAs)

Don’t mix WHO and WHAT in the same A/B test

Use the one-change-one-week rule

Track the right metrics for each layer

Use a test log to stack learning

Clean tests create buyer clarity inside The Buyer Clarity System™

🎁 Complimentary Ebook

Want our A/B test log sheet, buyer persona message templates, and the target audience channel matrix?

Grab our COMPLIMENTARY Buyer Clarity Guide here:

👉 Download your complimentary ebook now

🧭 Final Word

Stop changing everything.

Aim first (target audience).

Then speak (buyer persona).

Then test one thing at a time.

That’s how we get clean wins without guessing—inside The Buyer Clarity System™.